NOVA | Virtual Production

An ICVFX virtual production project combining LED wall, Unreal Engine, motion capture, 3D scanning, and AI-driven pre-visualization.

Overview

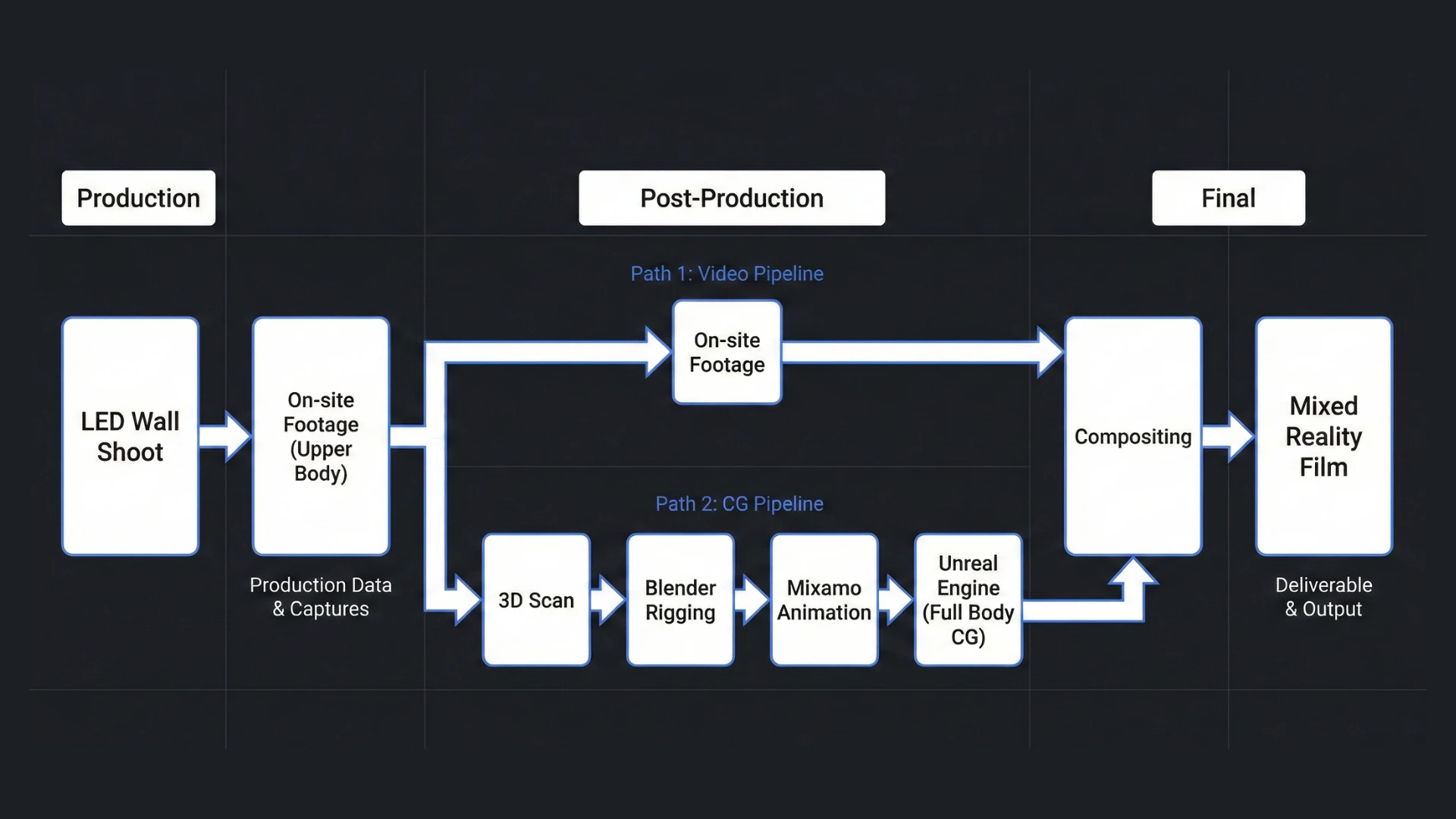

NOVA is an ICVFX (In-Camera VFX) virtual production project shot on an LED volume stage. The film blends live-action footage captured on the LED wall with fully CG characters rendered in Unreal Engine. Live-action shots primarily captured upper-body performances of real actors, while full-body and wide shots used 3D-scanned digital doubles placed directly in-engine. This interplay between physical and virtual is the core of the project’s mixed reality approach.

In this project, I served as the bridge between technical infrastructure and creative vision. My role involved:

- System Integration: Configuring and calibrating the on-site workflow that connects the OptiTrack mocap system and the LED wall to Unreal Engine.

- Environment & Cinematics: Designing immersive 3D environments and directing the cinematic sequence inside Unreal Engine.

- Technical Art: Developing custom HLSL shaders and Niagara VFX that balance visual fidelity with real-time performance.

- Asset Pipeline: Handling character rigging in Blender and ensuring seamless data transfer into the Unreal pipeline.

Pre-Production

Concept & Story

NOVA is set in a dystopian world where the protagonist searches for the location of a final time capsule vessel, journeying through a technologically advanced laboratory. Wide-angle shots alternate between realistic imagery and dream-like sequences.

The narrative follows a lone traveler who lands on a fog-covered coastline and makes her way into a coastal research station. Inside, she discovers a massive bio-organic vault chamber housing the Time Vessel, a capsule that bridges physical and virtual realities. As she attempts to fix a broken causality in the timeline, the vault erupts into gravitational storms of fractal light and quantum code. Through fragments of data and holographic memories, she must decide whether restoring the timeline means erasing everything built upon it.

“Every memory I stored here built a universe that doesn’t need me. No one is authorized to rewrite your origin.”

The story explores themes of time, memory, and the boundaries between physical and digital existence, brought to life through the convergence of virtual production, real-time rendering, and mixed reality filmmaking.

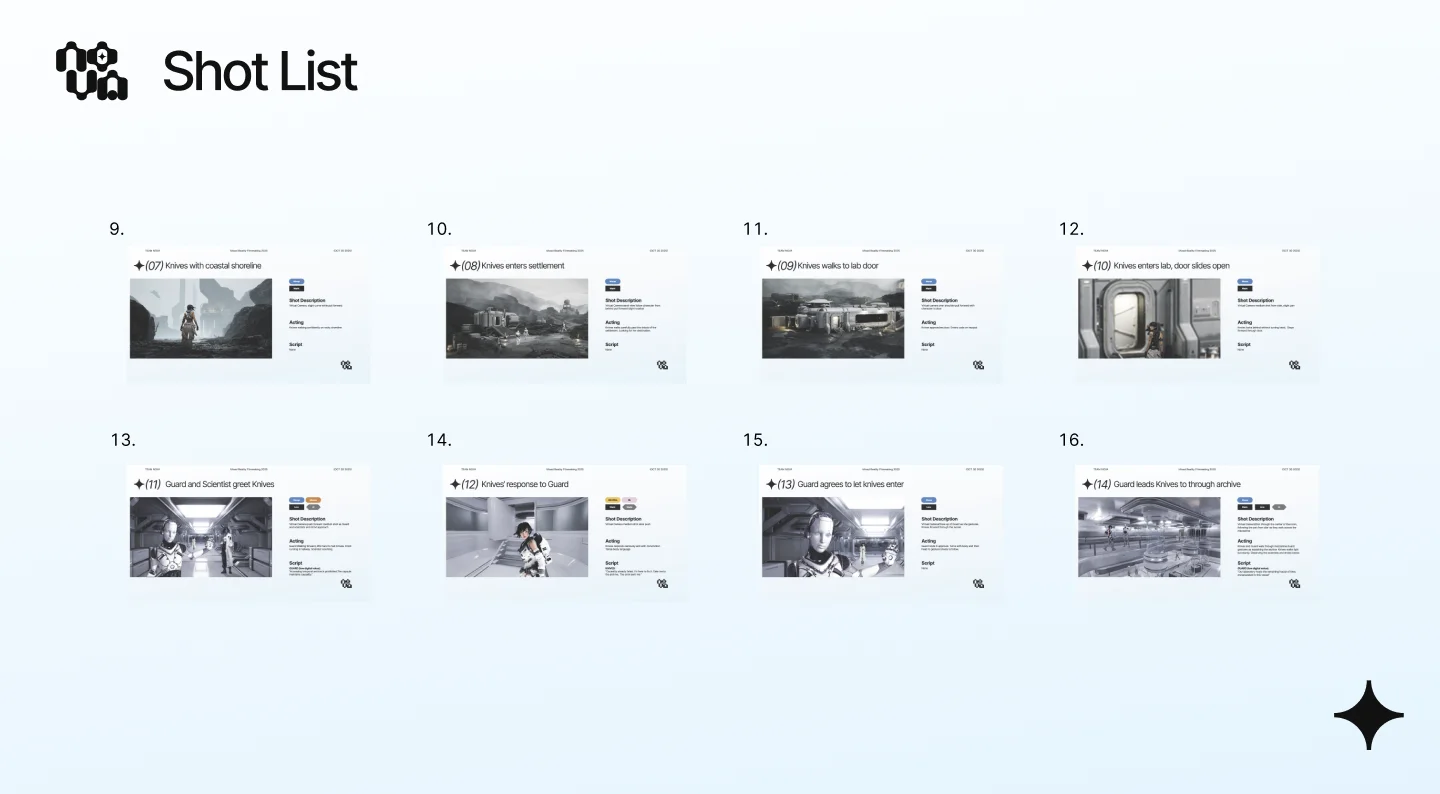

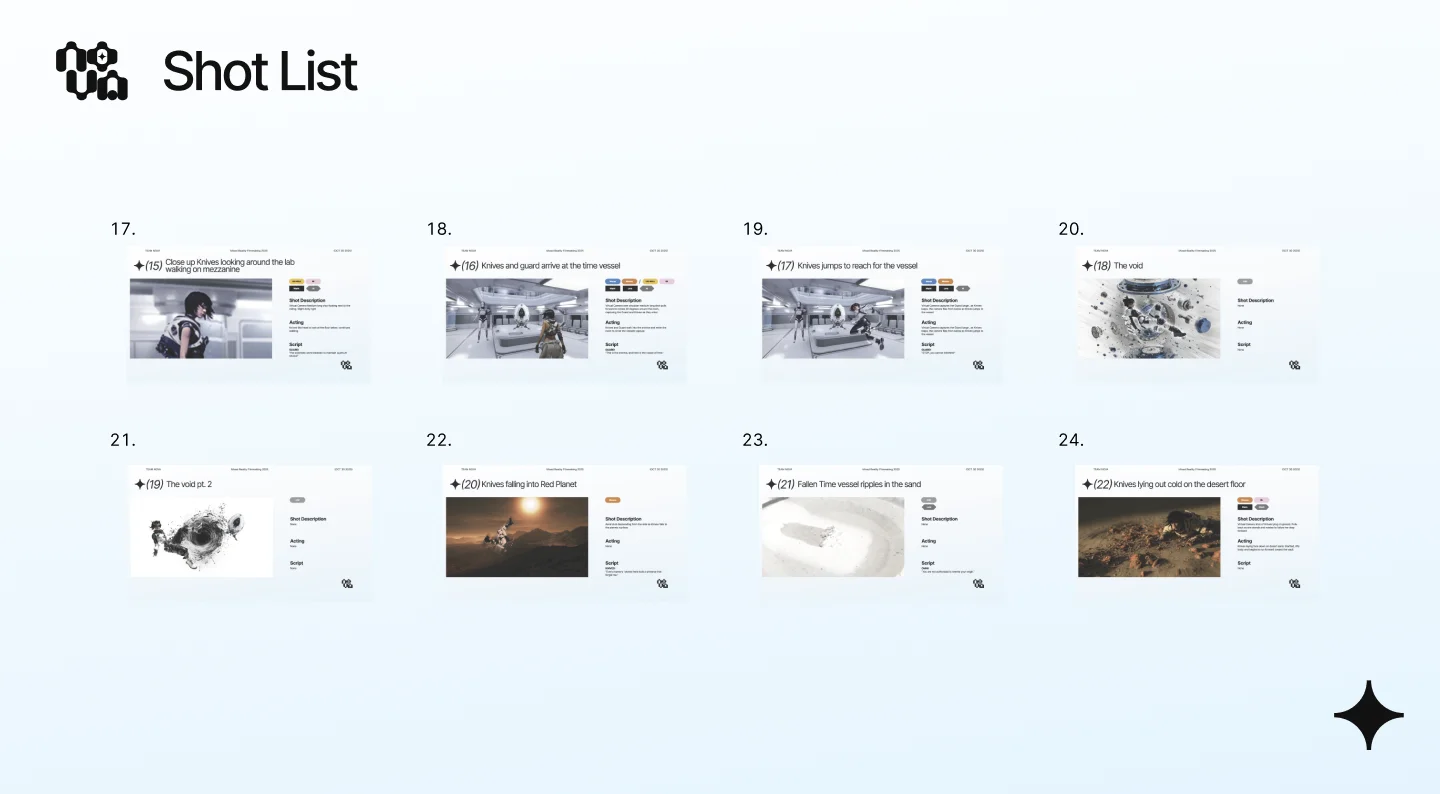

AI-Driven Pre-Visualization

Used Midjourney to turn a static concept shot list of 30+ shots into high-fidelity visual prototypes, which significantly improved pre-visualization and team communication.

Using Midjourney to generate visual prototypes from shot descriptions

Production

On-site LED Wall Shooting

On-site LED volume filming

Live-action footage captured on the LED wall

Post-Production

Virtual Cinematography & Compositing

The live-action footage on the LED wall primarily captured upper-body performances. For full-body shots, we used the 3D-scanned characters placed directly inside Unreal Engine. By interweaving real actors filmed on the LED volume with their digital doubles rendered in-engine, we created a seamless blend of physical and virtual, achieving a true mixed reality filmmaking approach.

Composed Shots: LED Wall + Full CG

The on-site footage captured on the LED wall was composited with fully CG characters rendered in Unreal Engine. Because the LED wall and physical floor limited live-action framing to upper-body performances, full-body shots relied on the 3D-scanned digital doubles placed directly in-engine. This compositing approach is at the heart of how we created a mixed reality film.

Composed shots: live-action LED wall footage intercut with full CG rendering

3D Scan & Rigging

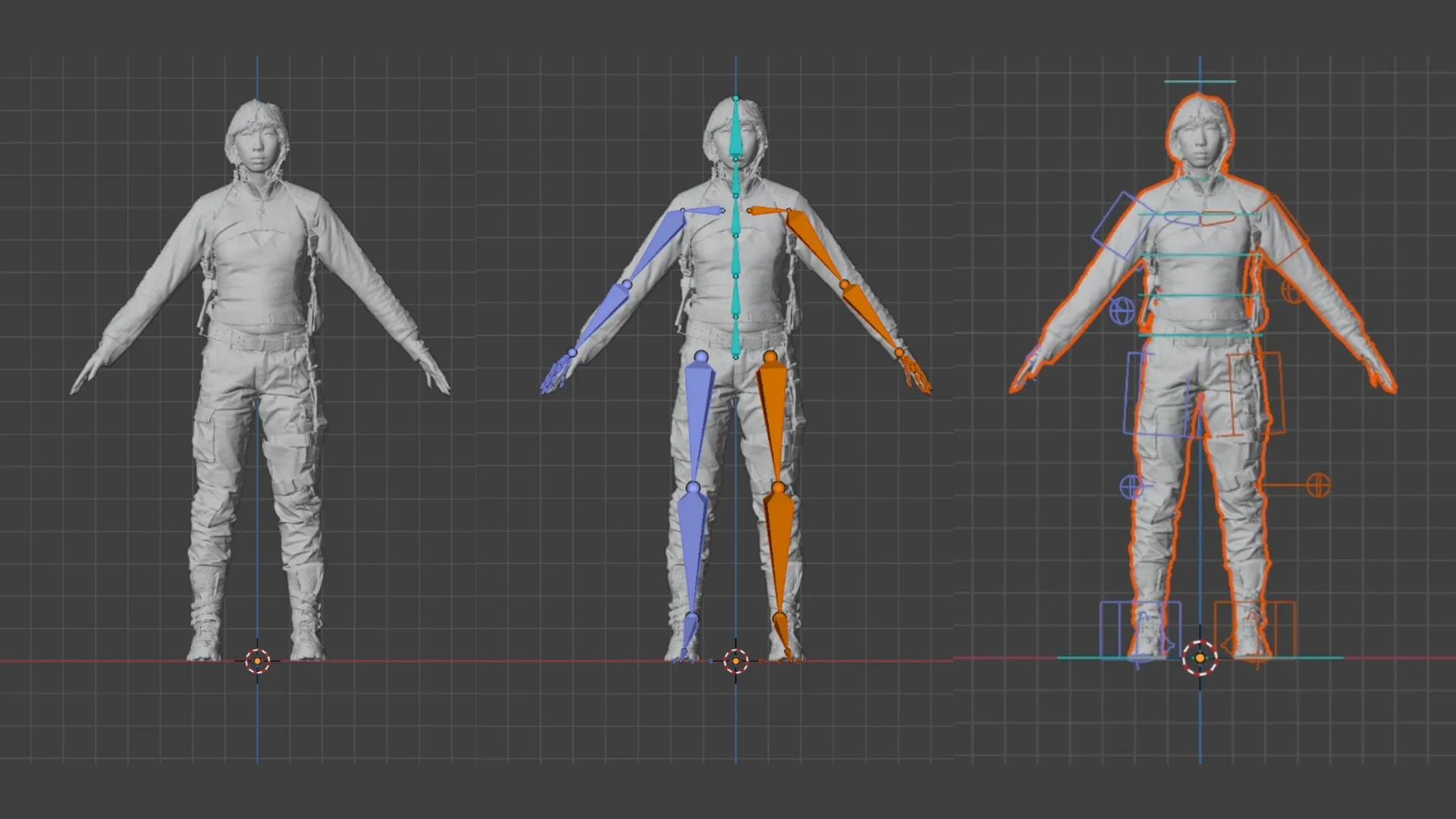

To overcome the framing constraints of the LED wall and physical floor, we scanned the actors and rigged 3D avatars in Blender. This allowed for full-body integration and realistic ground interaction that would otherwise be impossible in a standard ICVFX setup.

Motion Capture & Animation

To bring the digital doubles to life with natural movement, I established a custom animation pipeline. We recorded high-fidelity motion data using the OptiTrack Motion Capture system and integrated it with Mixamo and Blender for efficient rigging and retargeting, ensuring vivid and realistic character performances within the virtual environment.

Applying Mixamo animations to 3D scanned characters

Motion capture recording for custom animation

Technical Art: Niagara VFX

Helix Formation

Using the parametric equation of a helix, particles are procedurally positioned in Niagara to form a spiral path. The setup translates math functions into motion, allowing dynamic control of radius, turns, and height for generative particle effects.
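The helix placement can be sketched outside the engine as follows. This is a minimal CPU-side Python illustration, not the actual Niagara module. The function names (`helix_position`, `helix_points`) and the default parameter values are assumptions made for the example. Only the radius, turns, and height controls come from the description above.

```python
import math


def helix_position(t, radius=1.0, turns=3.0, height=2.0):
    """Return (x, y, z) on a helix for a normalized parameter t in [0, 1].

    x = r * cos(2*pi*turns*t), y = r * sin(2*pi*turns*t), z = height * t
    """
    angle = 2.0 * math.pi * turns * t
    return (radius * math.cos(angle), radius * math.sin(angle), height * t)


def helix_points(count, radius=1.0, turns=3.0, height=2.0):
    """Place `count` particles evenly along the helix, from bottom to top."""
    if count < 2:
        return [helix_position(0.0, radius, turns, height)]
    return [
        helix_position(i / (count - 1), radius, turns, height)
        for i in range(count)
    ]


if __name__ == "__main__":
    for point in helix_points(5, radius=1.0, turns=1.0, height=2.0):
        print(point)
```

In a particle system, `t` would typically be derived from each particle's index or normalized age, so changing radius, turns, or height reshapes the whole spiral without touching individual particles.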

Fractal Tree (Custom HLSL)

Built a GPU Niagara module with Custom HLSL to grow a branching fractal as an iterative, stack-style system that applies per-branch rotation, scale, length decay, and hub-noise jitter. Exposed controls for depth, spread, and randomness enable real-time, procedural tree variations.
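The iterative, stack-style growth can be illustrated with a small Python sketch. It is a simplified 2D, CPU-side stand-in for the idea, not the Custom HLSL module itself. The function name `grow_tree`, the two-children-per-branch rule, and all default values are assumptions for the example. The noise-based jitter is replaced here by a seeded random angle offset.

```python
import math
import random


def grow_tree(depth=4, spread_deg=25.0, length=1.0, decay=0.7,
              jitter_deg=0.0, seed=0):
    """Grow a binary fractal tree iteratively and return its line segments.

    Each segment is ((x0, y0), (x1, y1)). An explicit stack replaces
    recursion, which is the usual workaround where recursion is unavailable.
    """
    rng = random.Random(seed)
    segments = []
    # Stack entry: (start x, start y, heading in radians, length, levels left)
    stack = [(0.0, 0.0, math.pi / 2.0, length, depth)]
    while stack:
        x, y, angle, seg_len, levels = stack.pop()
        if levels == 0:
            continue
        x2 = x + seg_len * math.cos(angle)
        y2 = y + seg_len * math.sin(angle)
        segments.append(((x, y), (x2, y2)))
        # Spawn a left and a right child: rotate, shorten, and add jitter.
        for sign in (-1.0, 1.0):
            jitter = rng.uniform(-jitter_deg, jitter_deg)
            offset = math.radians(sign * spread_deg + jitter)
            stack.append((x2, y2, angle + offset, seg_len * decay, levels - 1))
    return segments


if __name__ == "__main__":
    tree = grow_tree(depth=5, spread_deg=30.0, jitter_deg=5.0, seed=42)
    print(len(tree), "segments")
```

With two children per branch, a tree of depth `d` produces `2**d - 1` segments, so depth is the main cost control. Spread and jitter only change the shape.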

Helix particle formation

Fractal tree generation

Particle Ending Sequence

Ending sequence built with Niagara particle effects (rendered in Unreal Engine)

System Integration & Technology

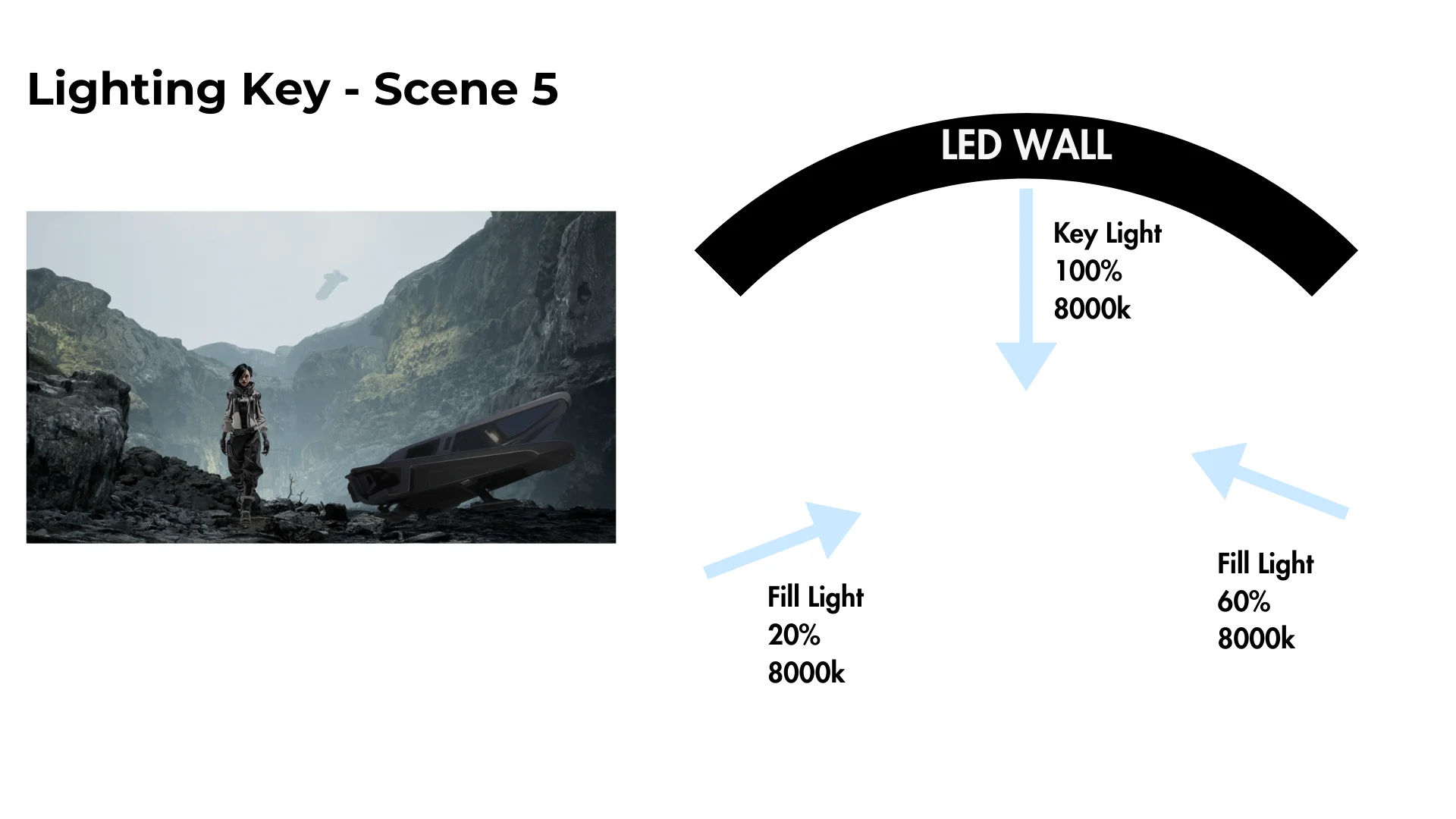

Lighting & Practical Effects

To maximize efficiency under hardware constraints, we implemented a meticulous Pre-lighting Strategy. By mapping out the light intensity, color temperature, and positioning for every single shot in advance, we ensured that our limited resources were utilized to their full potential. Beyond digital lighting, we also incorporated practical effects such as smoke machines and fans to bridge the gap between virtual environments and physical reality. The essence of Virtual Production lies in the seamless integration of physical and digital elements; without accurate on-set lighting and tangible atmospheric effects, even the most stunning virtual environments lose their realism.

Lighting plan for each scene

Practical effects: smoke machine and fan

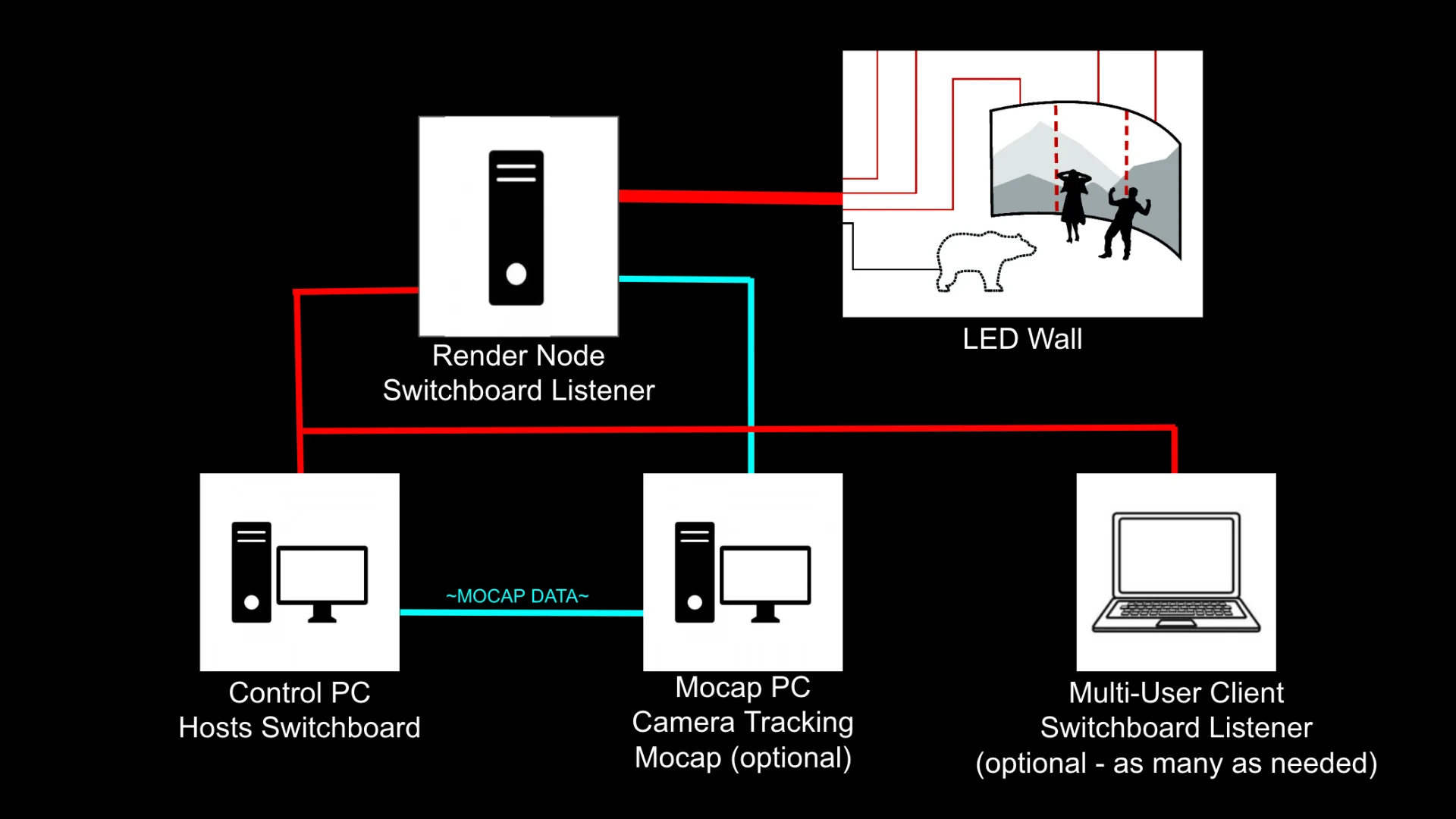

System Architecture

The virtual production pipeline required multiple systems working in sync: a Render Node running nDisplay to drive the LED wall with Unreal Engine content, a Control PC hosting Switchboard to manage render nodes, and a Mocap PC handling OptiTrack camera tracking data.

OptiTrack camera tracking with Blackmagic camera on the LED volume stage

System Connection

Render Node

- Runs Switchboard Listener

- Drives LED wall via nDisplay

Control PC

- Hosts Switchboard

- Manages all render nodes

Mocap PC

- OptiTrack camera tracking

- Sends tracking data to render node
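The connections listed above can be summarized as a small data model. The Python sketch below is only a way to reason about who talks to whom. The class name, the `LINKS` table, and the helper function are hypothetical and are not taken from any Switchboard, nDisplay, or OptiTrack configuration format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Link:
    source: str
    target: str
    payload: str


# Data flow between machines as described in the list above (illustrative only).
LINKS = [
    Link("Control PC", "Render Node", "Switchboard commands to Switchboard Listener"),
    Link("Mocap PC", "Render Node", "OptiTrack camera tracking data"),
    Link("Render Node", "LED wall", "nDisplay output"),
]


def inputs_of(machine):
    """Return the names of machines that send data to `machine`."""
    return [link.source for link in LINKS if link.target == machine]


if __name__ == "__main__":
    print(inputs_of("Render Node"))
```

Laying the links out this way makes the Render Node's central role explicit: it receives both control and tracking input, and it is the only machine that feeds the LED wall.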

Behind the Scenes

On-set

Floor setup with sandbags and platforms to raise actor height, solving the bottom seam issue during filming

On-set production documentation

Other Production Process

Blender rigging process

Niagara system tree process

Tree with particle effects

Motion capture recording

Production Team

| Name | Role |
|---|---|
| Ava Kling | Creative Director, Compositor, Unreal Artist (Environment & Cinematics) |
| Dazai Chen | Technical Director, Technical Artist, Unreal Artist (Environment & Cinematics) |
| Moira Zhang | Sound Director, Original Score Composer |
| Nini Li | C4D Artist |
| Woo Young Kim | Principal Designer, 2D Animator, Cinematographer |

Virtual Production Project, 2025
