Spatial Memory: NYU IDM Thesis

A VR spatial memory album. Step into 3DGS captures of personally meaningful places, layered with designed light, ambient sound, and personal narration.

What it is

A VR experience on Meta Quest 3 that holds eight 3DGS-captured places from my life, each layered with designed light, ambient sound, and a voiceover sharing what the place means. The viewer browses the archive as a curved arc of photo cards, picks one, and the carousel dissolves into the captured space around them. They are no longer looking at a memory; they are standing inside one, with my voice next to them telling them why this place matters.

Why I made it

I am a stage lighting designer. I perceive the world through space: how light fills a room, how a place feels when you stand in it. When I remember someone, I do not always remember the conversation, but I remember the scene, the light, the feeling of being there. Photos and videos record what a place looked like, but they always felt like they were missing something: the sense of actually being surrounded by it.

3D Gaussian Splatting can preserve a real space, atmosphere and light included. VR places someone physically inside it. Together they reach where flat media could not. But during Demo Day a viewer kept asking, while still inside the headset, “where is this? why this place?” Presence alone produces a room. What turns it into a memory is everything we do not usually think of as part of a place but carry in every memory of one.

The shift: preserve to share

The thesis began as a question about preservation. By the end it had moved to sharing. One captured space is a tech demo. Multiple captured spaces, layered with the elements that make them feel inhabited (light, sound, voice), become a medium. The question shifted from “can you revisit a memory?” to “can someone else step into yours, and feel what it meant?”

The eight spaces

The archive holds eight curated places, each chosen for what it carries rather than for visual interest.

These are not interchangeable backdrops. Each is selected because it holds a specific relationship to my life that no other space could replace.

The personal layer

Each space gets three design elements layered onto the 3DGS capture. Together, they are the design language of spatial memory: the elements we do not usually think of as part of a place but carry in every memory of one.

Designed light

3DGS captures freeze the lighting at the moment of capture. To match how the place is remembered, I rebuilt the light field inside Unreal Engine: directional lights for sun, sphere lights for fill, spotlights for accents. The Okinawa beach gets warm, low-angle sunset light. The temple courtyard gets the soft afternoon haze of incense smoke. This is the part of the design that draws directly on a decade of stage lighting practice.

Ambient sound

3DGS captures are silent. Each space gets ambient audio that restores what the freeze took away: waves at the beach, distant traffic in the city, the quiet of a room at night. Light and sound rebuild the atmosphere of the remembered place.

Personal narration

A voiceover in each space, recorded with ElevenLabs from scripts I wrote. The tone is closer to standing next to someone and showing them a place you care about than to a museum guide.

“When I see a sunset, I always raise my hands to catch the light, watching the orange and pink colors flow on my skin.” — narration in the Okinawa scene

Each narration takes a different approach depending on what the space carries. At Nankunshen Temple it becomes interactive: VR cannot hold incense, so the viewer is invited to put their palms together and introduce themselves to the Jade Emperor, following the same prayer ritual my family has practiced for generations.

In the Brooklyn apartment the narration is quieter. It points the viewer toward the table where photographs of past meals are placed, because when I looked back through old photos, most of my memories of that apartment turned out to be meals I had at that table.

The experience

Four moments, in order.

1. Welcome. A text panel appears in the dark, my voice introducing the experience. “Hello, welcome. I’m Dazai. I want to take you into some of the places that mattered to me. This is my spatial memory album.”

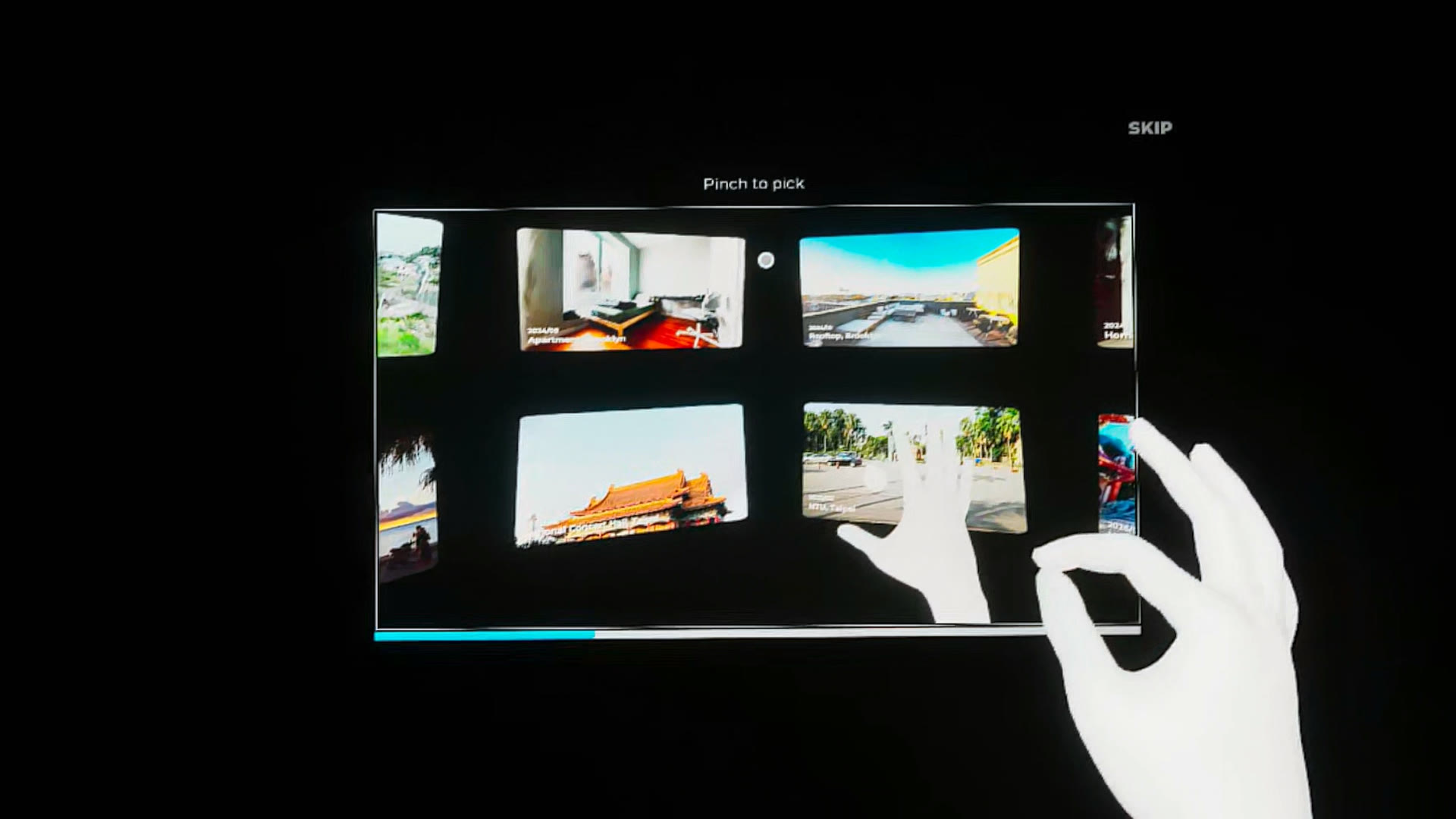

2. Demo video. A single guided video plays inside VR, showing the full interaction flow as a continuous sequence: hover a card, pinch to select, enter the space, open the palm menu, return to the album. This replaces the original four-step, gesture-by-gesture tutorial, which felt fragmented to first-time VR users at Demo Day.

3. Carousel. Eight photo cards on a curved arc. Hover and pinch to enter.

4. Memory. The carousel dissolves. A 3DGS space loads around you. The light, the sound, my voice. You stand in the place.
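The curved arc of cards in step 3 can be sketched as a small layout function. This is a minimal illustration, not the project's actual code: the function name, the 1.5 m radius, and the 120-degree span are all assumptions, and the viewer is assumed to sit at the origin looking along +z.

```typescript
// Hypothetical layout helper. Names, radius, and arc span are illustrative
// assumptions, not values taken from the project.
interface CardPose {
  x: number;        // sideways offset from the viewer (negative = left)
  z: number;        // forward distance from the viewer
  angleDeg: number; // angle around the viewer, 0 = straight ahead
}

function layoutCardsOnArc(
  count: number,
  radius: number,
  arcSpanDeg: number
): CardPose[] {
  const poses: CardPose[] = [];
  // Spread the cards evenly and symmetrically around "straight ahead".
  const stepDeg = count > 1 ? arcSpanDeg / (count - 1) : 0;
  const startDeg = count > 1 ? -arcSpanDeg / 2 : 0;
  for (let i = 0; i < count; i++) {
    const angleDeg = startDeg + i * stepDeg;
    const angleRad = (angleDeg * Math.PI) / 180;
    poses.push({
      x: radius * Math.sin(angleRad),
      z: radius * Math.cos(angleRad),
      angleDeg,
    });
  }
  return poses;
}

// Eight cards, matching the eight spaces in the archive.
const cardPoses = layoutCardsOnArc(8, 1.5, 120);
console.log(cardPoses.length);
```

Because every card sits at the same distance from the viewer, each one can be turned by its own `angleDeg` to keep facing the viewer, which is what makes the arc read as a carousel rather than a flat wall.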

How it was built

Capture. iPhone video, Insta360 X5 360-degree video, or single photographs. Frames extracted, aligned in RealityScan, trained as 3DGS in PostShot. For older memories where I only had a single photo, I used Sharp (Mescheder et al., 2025) to generate a 3DGS scene from one image.

Runtime. Unreal Engine 5 + a custom open-source plugin I wrote (SplatRenderer) for rendering 3D and 4D Gaussian Splatting in real time on Quest 3. Interaction logic in TypeScript via PuerTS; Blueprint handles gesture detection.
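As a rough idea of what the TypeScript interaction logic has to track, the four moments of the experience can be modelled as a small state machine. This sketch is plain TypeScript with no PuerTS or Unreal bindings; the type names, event names, and `nextState` function are hypothetical and only mirror the flow described on this page.

```typescript
// Hypothetical model of the experience flow. All names are illustrative
// assumptions; the real project wires this to Unreal through PuerTS.
type Stage = "welcome" | "demoVideo" | "carousel" | "memory";

type AlbumEvent =
  | { kind: "narrationFinished" }
  | { kind: "videoFinished" }
  | { kind: "cardPinched"; spaceIndex: number }
  | { kind: "returnToAlbum" };

interface AlbumState {
  stage: Stage;
  activeSpace: number | null; // index of the loaded space, if any
}

const SPACE_COUNT = 8; // the archive holds eight spaces

function nextState(current: AlbumState, event: AlbumEvent): AlbumState {
  switch (current.stage) {
    case "welcome":
      return event.kind === "narrationFinished"
        ? { stage: "demoVideo", activeSpace: null }
        : current;
    case "demoVideo":
      return event.kind === "videoFinished"
        ? { stage: "carousel", activeSpace: null }
        : current;
    case "carousel":
      // Only a pinch on a valid card enters a memory.
      if (
        event.kind === "cardPinched" &&
        Number.isInteger(event.spaceIndex) &&
        event.spaceIndex >= 0 &&
        event.spaceIndex < SPACE_COUNT
      ) {
        return { stage: "memory", activeSpace: event.spaceIndex };
      }
      return current;
    case "memory":
      // The palm menu is the way back to the album.
      return event.kind === "returnToAlbum"
        ? { stage: "carousel", activeSpace: null }
        : current;
  }
}

// Walk through one visit: welcome, demo video, pick the fourth card.
let albumState: AlbumState = { stage: "welcome", activeSpace: null };
const visit: AlbumEvent[] = [
  { kind: "narrationFinished" },
  { kind: "videoFinished" },
  { kind: "cardPinched", spaceIndex: 3 },
];
for (const e of visit) albumState = nextState(albumState, e);
console.log(albumState);
```

Events that do not apply to the current stage are ignored, so a stray pinch during the welcome or the demo video cannot skip the viewer ahead.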

Hand tracking. Meta Interaction SDK on Quest 3. Pinch to select, palm menu summoned by raising the left hand and facing the palm toward the face.
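The palm menu condition (left hand raised, palm facing the face) comes down to a height check plus a dot product. The sketch below shows only that geometric idea in plain TypeScript. In the project itself, gesture detection runs in Blueprint on top of the Meta Interaction SDK, so this is not the actual implementation; the function name, the 35-degree tolerance, the 50 cm height threshold, and the Z-up centimetre convention (as in Unreal) are assumptions.

```typescript
// Hypothetical palm-menu check. Thresholds and names are illustrative
// assumptions. Coordinates are Z-up, in centimetres.
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

function subtract(a: Vec3, b: Vec3): Vec3 {
  return { x: a.x - b.x, y: a.y - b.y, z: a.z - b.z };
}

function dot(a: Vec3, b: Vec3): number {
  return a.x * b.x + a.y * b.y + a.z * b.z;
}

function normalize(v: Vec3): Vec3 {
  const len = Math.sqrt(dot(v, v));
  // A zero vector has no direction; return it unchanged.
  if (len === 0) return { x: 0, y: 0, z: 0 };
  return { x: v.x / len, y: v.y / len, z: v.z / len };
}

interface PalmMenuOptions {
  maxAngleDeg: number;        // how far the palm may tilt away from the head
  maxDropBelowHeadCm: number; // how far below head height still counts as raised
}

function shouldShowPalmMenu(
  palmPosition: Vec3,
  palmNormal: Vec3,
  headPosition: Vec3,
  options: PalmMenuOptions = { maxAngleDeg: 35, maxDropBelowHeadCm: 50 }
): boolean {
  // "Raised": the palm is no more than the threshold below the head.
  const raised = headPosition.z - palmPosition.z <= options.maxDropBelowHeadCm;

  // "Facing the face": the palm normal points roughly at the head.
  const toHead = normalize(subtract(headPosition, palmPosition));
  const alignment = dot(normalize(palmNormal), toHead);
  const facing = alignment >= Math.cos((options.maxAngleDeg * Math.PI) / 180);

  return raised && facing;
}

// Palm held in front of the body, slightly below the head, turned toward it.
const head: Vec3 = { x: 0, y: 0, z: 160 };
const palm: Vec3 = { x: 40, y: 0, z: 140 };
console.log(shouldShowPalmMenu(palm, { x: -2, y: 0, z: 1 }, head));
```

Requiring both conditions keeps the menu from appearing when the hand hangs at the viewer's side with the palm happening to point upward, or when a raised hand is turned away to pinch a card.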

What I learned

Captured space is a canvas, not a finished work. The capture preserves geometry, light, and atmosphere with remarkable fidelity, and VR places someone physically inside it. But a stranger standing inside a faithfully captured room does not feel what the person who lived there felt. They feel a room. What turns it into a place is the personal layer: light tuned to memory, ambient sound, a voice sharing why this place matters, and curation that selects spaces for personal significance rather than visual interest.

The medium itself echoes this. 3D Gaussians are translucent, soft, not fixed. Up close they reveal an almost smoke-like quality. Edges dissolve. That imperfection turned out to be right. Memory is not fixed either; it shifts over time, changes with experience. The medium and what it carries share the same quality.

At the showcase

For the IDM Showcase booth (May 7, 2026), I built a 16:9 attract-loop poster that runs alongside the live VR build. It cycles between a hero title and a scrollable walk-through of the viewer’s journey, with QR codes for visitors to scan.

(Best viewed full screen. Press F to toggle full-screen, arrow keys to step between states.)

Process & documentation

This page is the project profile. The process behind it lives in the sub-pages below.

- Concept Statement — original framing

- Weekly Log — semester-long process documentation

- Tech Dev — system architecture and implementation notes

- Class Notes — including post-Demo Day pivot

NYU IDM MS Thesis, Spring 2026. Demo Day March 11. Final paper March 29.

Recent Updates

Week 10: Narration, Onboarding v2 & Final Demo

Apr 5. Recording narration stories for 8 spaces, rebuilding onboarding as single demo video with synced text overlay, and capturing final VR demo.

Week 9: Thesis Draft Feedback

Mar 30. Professor Camila's written feedback on the full thesis draft: strengthen the memory layer, revise abstract framing, expand UX/UI discussion.

Week 8: Writing the Thesis

Mar 28. 1-on-1 feedback, thesis draft revision: rewriting the abstract, articulating the 3DGS-memory connection, and merging light and sound into one design layer.