Week 2: From Feedback to First VR Tests

First VR space tests in the NYC apartment, the emergence of a location-triggered interaction concept, and readings on critique.

February 9, 2026

Overview

This week moved between two tracks. The practice side took a significant leap: two VR test sessions in a 3DGS-captured space of my first NYC apartment led to a clearer project direction, an interaction concept, and a set of concept sketches. The coursework side focused on readings about critique and feedback, prompting reflection on how I receive and process input at this stage.

Core Inquiry

Through conversations with friends and my own reflection this week, later confirmed by the VR tests, a clearer through-line emerged:

The Through-line

- I’m a lighting designer. I’ve been doing one thing: using space to transmit feeling to an audience.

- But spatial experiences are fleeting. When the show ends, the light disappears, the atmosphere is gone. I can’t even go back and experience my own work.

- 3DGS and VR offer a new possibility:

  - 3DGS can preserve space (not just images of it)

  - VR can let people step into space (not just look at it)

- This combination means spatial experience no longer has to disappear. It can be preserved, revisited, and shared.

Where “Memory” Fits

At first, “spatial memory” felt like a label rather than the essence. But after the VR tests, memory turned out to be central, not as an academic concept, but as what actually happens when you re-enter a space you lived in. Memories surfaced on their own, tied to specific locations. The space triggered them. Memory is the material the experience is made of: photos, videos, sounds, all anchored to the places where they happened.

What Gets Preserved

It won’t be the same experience. The original moment includes time, people, context, sound, all senses. You can’t preserve everything. But you can preserve a trace of the emotion or feeling. What’s preserved is a “frame” of time: a frozen moment, or a clip of a few seconds. The living, dynamic quality is gone. What remains is the feeling.

Even in live performance, the audience doesn’t feel “my” experience. They take in the light, the space, and extend it into their own imagination. The goal was never to transmit an identical experience. It’s about creating conditions for others to have their own.

The Medium: 3DGS + VR as a New Stage

The point is not to study the difference between live and virtual spatial experience. The point is to treat 3DGS + VR as a new “stage” and create spatial experiences within it. This is the same thing I’ve always done as a lighting designer. I didn’t study “how theater lighting differs from outdoor lighting.” I simply created in my medium and let the audience feel.

I am someone who uses space to transmit feeling. I used to work with light, in theaters. Now I’m exploring 3DGS + VR as a new venue, asking: what kind of spatial experience can this medium create?

Space as Text, Path as Structure

In lighting design, I never started from zero. There was always an anchor: a script, music, choreography. Now with 3DGS + VR, the anchor is the space itself and the life that happened inside it. The NYC apartment has its own “text”: the clips, sounds, and images I captured while living there. I don’t need to invent a narrative. The space already contains one. My job is to design how someone discovers it.

The structure comes from:

- Space itself as text - the captured place’s architecture, light, and atmosphere dictate the experience. Like site-specific performance, the venue becomes the script.

- The viewer’s path as choreography - I design how someone moves through the space, what they encounter, how the atmosphere shifts. A choreography of attention, not a story.

This mirrors exactly how I work with light:

- Read the space (stage, set, performer positions) → in VR: the 3DGS-captured environment

- Design the path of change (which areas light up, when, transitions) → in VR: viewer movement, atmosphere shifts, clip triggers

- Guide feeling (the audience follows) → in VR: the viewer experiences their own emotional journey

Current Decisions

- Space: Starting with the NYC apartment. It’s a space I lived in, scanned, and have extensive media from. Nankunshen remains a secondary option.

- Feeling: Exploration and discovery. The viewer moves through a frozen space and finds traces of life at specific locations.

- Form: Free exploration with location-triggered fragments (sound, video, photo). Framed by a build/dismantle arc (space appears, you explore, space disappears).

Open Questions

- When a spatial experience that was meant to disappear gets preserved, is it still the same experience?

- What is lost and what is gained in the translation from live to virtual?

- When someone else enters a space I preserved, do they feel my experience or their own?

- What are the “cues” in this new medium? In theater, lighting cues serve two functions: creating atmosphere and directing attention. The question isn’t whether to use relighting specifically, but what tools in VR can shift atmosphere and guide focus. Sound, clip triggers, particle transitions, spatial changes - these are all potential cues.

- How do location-triggered clips change the viewer’s relationship to the space? Do they see my life, or project their own?

VR Space Tests: My First NYC Apartment

Why This Space

The original plan was to use the Nankunshen Temple. But while thinking about the relationship between space and self, I realized: Nankunshen is a place I’ve been to, not a place I’ve lived in. To truly understand what it means to preserve and re-enter a spatial experience, I needed to start with a space where my life actually happened.

My first apartment in New York, the first place I ever lived on my own outside of Taiwan, felt like the right starting point. I had already scanned it with 3DGS. The data was there. The question was: what would happen when I stepped back inside?

Test 1: First Entry

Spent about 8 minutes inside the space. The space felt comfortable. Even after 8 minutes, I wanted to stay. Sound and light emerged as important factors: I could hear the real world, not the captured space, and felt real sunlight on my skin instead of the light in the scene.

Test 1 detailed observations

Texture and materiality. The 3DGS quality creates a feeling that’s hard to name. Not quite “memory,” but something with a transparency to it. Things aren’t fully solid, but you can still tell what they are: clothes, a cabinet, a fan, a chair. The 3DGS noise that drifts through the space adds to this quality.

The unclear things are the interesting ones. After about 5 minutes, I started wanting to look at details, especially the things that didn’t scan clearly. These blurred, ambiguous objects drew my attention more than the clear ones. I know what they are because I lived there, but I wonder what someone else would see.

Light and heat. In VR, I was feeling the real sunlight from outside, not the light in the captured space. Light doesn’t just produce vision, it produces heat. My skin feels the sun. That’s a layer of spatial experience that VR can’t transmit.

Sound matters. I could still hear the real-world sounds around me. If I could hear sounds that belonged to that space instead, it would change the experience significantly. Sound may be a critical element.

Technical discoveries:

- Sprite size as a lever: Small sprites = particle-like, abstract space. Large sprites = more solid, recognizable space. The transition between these two states is interesting, almost like moving from memory to presence.

- Relight works, but is performance-heavy when the space is fully solid. Possible approach: use relight in particle state, then expand to full form without relight.

- Scale is slightly off. The space feels a bit larger than reality.
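
A minimal sketch of the sprite-size lever and the relight idea above, assuming the renderer exposes a single sprite scale and a relight on/off switch. The names `sprite_state`, `min_scale`, `max_scale`, and `relight_cutoff` are hypothetical, and the default values are placeholders rather than measured settings.

```python
def smoothstep(t: float) -> float:
    """Ease a 0..1 value so the transition starts and ends gently."""
    t = max(0.0, min(1.0, t))
    return t * t * (3.0 - 2.0 * t)


def sprite_state(progress: float,
                 min_scale: float = 0.2,
                 max_scale: float = 1.0,
                 relight_cutoff: float = 0.5) -> tuple[float, bool]:
    """Return (sprite_scale, relight_enabled) for a progress value in 0..1.

    progress 0 = small, particle-like sprites; 1 = large, solid sprites.
    Relight stays on only while the sprite scale is below the cutoff,
    which is an assumed way to avoid the cost of relighting the solid state.
    """
    eased = smoothstep(progress)
    scale = min_scale + (max_scale - min_scale) * eased
    return scale, scale < relight_cutoff
```

Driving `progress` from 0 to 1 over a few seconds would move the space from the abstract particle state to the solid one, with relight switching off partway through.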

Test 2: Revisited

Second session, documented through three simultaneous media (audio recording, Meta Quest screen capture, Insta360 third-person). The space felt deeply comfortable through familiarity. The 3DGS artifacts and glitches serve a purpose: they remind you this is a frozen instant, not a recreation.

Test 2 detailed observations

Comfort through familiarity. The space feels comfortable in a way that’s hard to explain. Because I know this place so well, I can stay for a long time without wanting to leave. Even though nothing changes, nothing moves, it’s still a place I want to be in.

The broken parts tell the truth. The 3DGS artifacts, the holes and glitches in the space, serve a purpose. They remind me that this is a frozen moment. The space is virtual, but it feels real to me. The imperfection is what makes it honest: this is not a recreation, it’s a captured instant.

Technical adjustments:

- Scale correction: Adjusted height to match real-world proportions. Once correct, the overall scale immediately felt right.

- Color correction: Original render was washed out. Adjusted saturation, gamma, and contrast. The space now reads as a real place with real color.
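
The two adjustments above can be sketched as simple arithmetic. This is an illustration under assumptions, not the tool settings I actually used: it assumes the scale fix is a uniform factor derived from one known reference height, and that color is corrected per pixel in the order saturation, gamma, contrast. The function names and parameters are hypothetical.

```python
def scale_factor(real_height_m: float, scanned_height_m: float) -> float:
    """Uniform factor that makes a scanned reference height match the real one."""
    if scanned_height_m <= 0:
        raise ValueError("scanned height must be positive")
    return real_height_m / scanned_height_m


def correct_color(rgb, saturation=1.0, gamma=1.0, contrast=1.0):
    """Apply saturation, then gamma, then contrast to one RGB pixel in 0..1."""
    r, g, b = rgb
    # Rec. 709 luma weights give the gray value that saturation pivots around.
    luma = 0.2126 * r + 0.7152 * g + 0.0722 * b
    out = []
    for c in (r, g, b):
        c = luma + (c - luma) * saturation           # > 1 pushes away from gray
        c = max(0.0, min(1.0, c)) ** (1.0 / gamma)   # gamma > 1 lifts midtones
        c = 0.5 + (c - 0.5) * contrast               # > 1 stretches around mid-gray
        out.append(max(0.0, min(1.0, c)))
    return tuple(out)
```

A factor below 1 from `scale_factor` would shrink a space that feels larger than reality, which matches the direction of the mismatch noted in Test 1.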

Key Discovery: Memories Surface Through the Space

Being inside the space triggered something unexpected. Memories started surfacing on their own, not as abstract recollections, but as specific moments tied to specific locations within the room. This led me to go back through over a year of photos and videos taken in that apartment.

What I found were clips, traces of life scattered across different angles and moments: dancing in the middle of the room, the view from the window, quiet moments at the desk, VR testing sessions. Each clip belonged to a specific spot in the space. The space itself was organizing the memories.

Concept Sketches

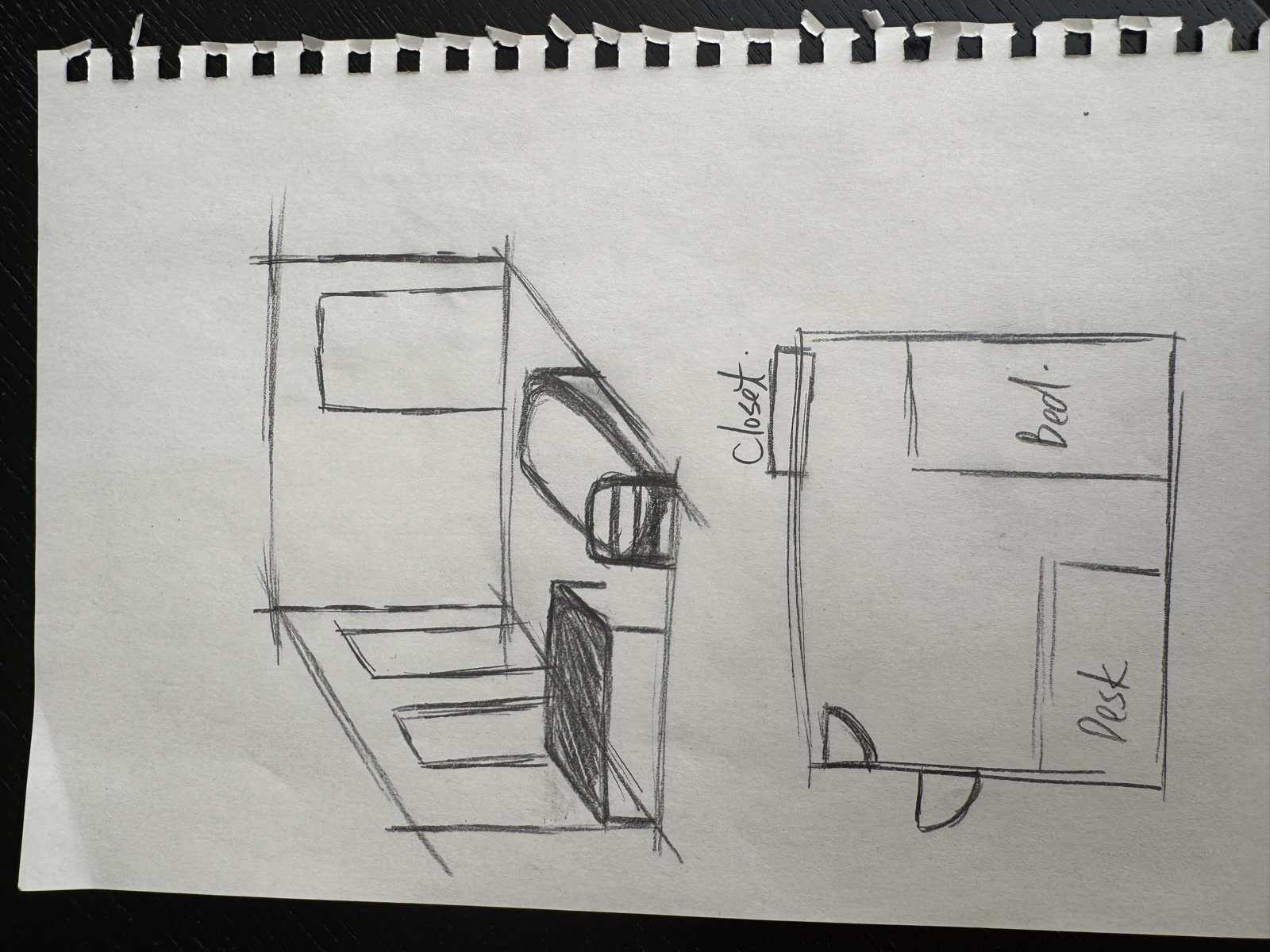

Two sketches emerged from this session:

Sketch 1: The space itself, drawn in both perspective view and top-down plan. The perspective shows the depth and spatial feeling of entering the room. The plan view maps the layout: closet, bed, desk, marking the key zones where life happened.

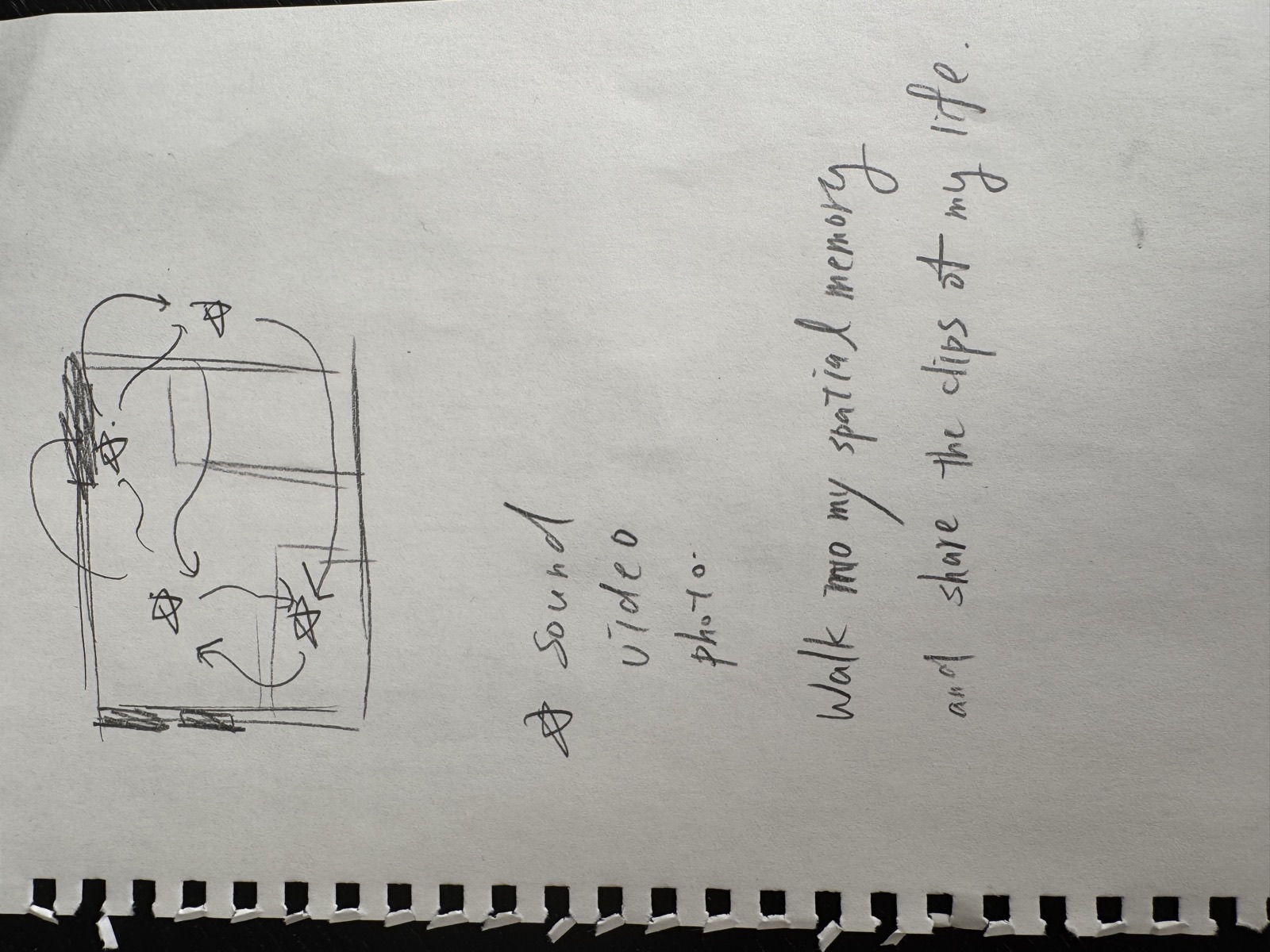

Sketch 2: The interaction concept. A viewer moves freely through the 3DGS space. At various locations (marked as nodes along the path), media fragments are triggered:

- Sound - ambient recordings from that spot (e.g., street noise near the window)

- Video - clips shot from that specific angle (e.g., dancing in the center of the room)

- Photo - still images tied to that location

The key phrase written on the sketch: “Walk into my spatial memory and share the clips of my life.”
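
One possible way to hold the fragments from Sketch 2 as data, so they can later be cataloged by location. The `Fragment` fields, the zone labels, and the file names below are all hypothetical placeholders, not my actual media.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Fragment:
    kind: str        # "sound", "video", or "photo"
    zone: str        # hypothetical zone label from the plan view
    position: tuple  # (x, y, z) in scene units, assumed
    source: str      # hypothetical file name


def group_by_zone(fragments):
    """Return a dict mapping each zone to the fragments anchored there."""
    zones = defaultdict(list)
    for fragment in fragments:
        zones[fragment.zone].append(fragment)
    return dict(zones)


# Placeholder entries that mirror the examples in the sketch.
example = [
    Fragment("sound", "window", (2.0, 1.5, 0.0), "street_noise.wav"),
    Fragment("video", "center", (0.0, 1.5, 0.0), "dancing.mov"),
    Fragment("photo", "desk", (-1.0, 1.2, 1.0), "desk_evening.jpg"),
]
catalog = group_by_zone(example)
```

Grouping by zone keeps the space, rather than the timeline, as the organizing principle for the clips.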

Interaction Design: Location-Triggered Memory Fragments

The concept crystallized: instead of navigating between separate memory-spheres (as considered in Test 1), the interaction is location-triggered within the space itself.

- Each clip exists at the exact position and angle it was filmed. The trigger point in the virtual space corresponds to where the iPhone was when the clip was captured.

- Gaze alignment reveals the clip. The viewer’s headset has a forward vector. When it aligns with a trigger point’s position and direction, the clip appears. You have to stand where I stood and look where I looked.

- It feels like a crack in the frozen world. The static 3DGS space briefly comes alive at that specific perspective, showing a living moment that happened right there.

The 3DGS captures a frozen, static moment in time, but by embedding photos, videos, and sound at specific locations, the space comes alive. The viewer explores freely and discovers traces of life that once happened there. The space is the interface. Movement is the interaction. Memory is the content.
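
The gaze-alignment rule described above can be sketched as a distance check plus an angle check. This is a rough illustration, assuming the headset pose and each trigger are available as plain (x, y, z) tuples; `clip_is_revealed`, `max_distance`, and `max_angle_deg` are hypothetical names, and the thresholds are guesses that would need tuning in the headset.

```python
import math


def _normalize(v):
    """Return v scaled to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    if length == 0:
        raise ValueError("direction vector must be non-zero")
    return tuple(c / length for c in v)


def clip_is_revealed(head_pos, head_forward, trigger_pos, trigger_dir,
                     max_distance=0.5, max_angle_deg=20.0):
    """True when the viewer stands near the trigger and looks the same way.

    trigger_pos and trigger_dir stand for where the phone was and which
    way it pointed when the clip was filmed.
    """
    if math.dist(head_pos, trigger_pos) > max_distance:
        return False
    forward = _normalize(head_forward)
    direction = _normalize(trigger_dir)
    # Dot product of unit vectors is the cosine of the angle between them.
    cos_angle = sum(a * b for a, b in zip(forward, direction))
    return cos_angle >= math.cos(math.radians(max_angle_deg))
```

Comparing cosines avoids calling `acos` every frame. Looser thresholds would make clips easier to find, and tighter ones would make the viewer stand and look more precisely where I did.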

Narrative Structure: Build and Dismantle

A potential structure for the full experience:

- Opening: I recorded a timelapse of setting up this apartment when I first moved in. The space being built from nothing, furniture appearing, objects finding their places. This could be the entry point: watching the space come into existence.

- Experience: The viewer is inside the frozen 3DGS space, discovering memory fragments at different locations through movement.

- Closing: I also recorded the teardown when I moved out. The space being packed away, emptied, returned to nothing. The experience ends with disappearance.

This creates a full arc of creation, presence, and disappearance, which is exactly what happens to every space we live in.

Space Decay: The Space Dissolves as Memory Transfers

A concept that emerged from the 1-on-1 with Camila: as the viewer discovers more clips, the 3DGS space gradually decays. The particle count and size decrease, so the environment dissolves from high-fidelity to abstract particles.

The logic: once you’ve seen the clips and absorbed the memories, you don’t need the high-fidelity visual environment anymore. The feeling has already transferred to the viewer’s mind. The space can let go.

This connects the technical properties of 3DGS (controllable splat count and size) directly to the conceptual meaning of the work. The medium speaks to the message: memory is fleeting, spaces dissolve, what remains is feeling.
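
A minimal sketch of the decay mapping, assuming a linear falloff driven by how many clips the viewer has found. `decay_state`, `min_keep`, and `min_scale` are hypothetical names, and the floor values are placeholders so the space never vanishes completely before the closing sequence.

```python
def decay_state(clips_found: int, clips_total: int,
                min_keep: float = 0.1, min_scale: float = 0.3):
    """Return (fraction_of_splats_to_keep, splat_scale) for the current progress.

    With no clips found the space is at full fidelity (1.0, 1.0).
    With all clips found it reaches the floors (min_keep, min_scale).
    """
    if clips_total <= 0:
        raise ValueError("clips_total must be positive")
    progress = max(0.0, min(1.0, clips_found / clips_total))
    keep_fraction = 1.0 - (1.0 - min_keep) * progress
    splat_scale = 1.0 - (1.0 - min_scale) * progress
    return keep_fraction, splat_scale
```

A linear curve is only the simplest choice. An eased curve that holds fidelity longer and dissolves quickly near the end might feel closer to letting go.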

Questions for 1-on-1 (Camila)

Quick context: I’ve been testing my NYC apartment in VR (3DGS scan). When I entered, memories surfaced naturally, tied to specific locations. This led to an interaction concept: the viewer walks through the space, and location-triggered clips (video, photo, sound) from my life there appear at the spots where they happened. The space is the interface, movement is the interaction.

Questions:

- Direction check: Does “re-entering a lived space through 3DGS + VR, with location-triggered memory fragments” feel focused enough as a thesis direction?

- Demo Day (3/11): What level of completion would you expect? I’m planning to show the NYC apartment space with initial location-triggered clips and the build/dismantle arc.

Plan: Weeks 3-7 (Toward Demo Day)

Core principle: making is thinking. Don’t wait until the concept is fully formed to start. Entering the space IS the thinking process.

Week 3 (2/9 - 2/15)

- 1-on-1 with Camila: share VR test findings and interaction concept

- Collect and catalog all existing clips (photos, videos, audio) from the NYC apartment, organized by location

- Prototype location-triggered interaction: walk to a spot, fragment appears

- Edit documentation video (Meta Quest + Insta360 footage)

Week 4 (2/17) - Demo Prototypes Due

- Show: NYC apartment VR space with initial location-triggered clips working

- Doesn’t need to be finished. Needs to be an experienceable direction.

- Test build/dismantle arc (timelapse opening and closing)

Week 5 (2/23) - Annotated Paper Outline

- Refine interaction design (which clips at which locations, timing, transitions)

- Implement sound layer (ambient audio tied to locations)

- Paper outline: core argument + chapter structure

Week 6-7 (3/2 - 3/11) - Demo Day

- Integrated prototype: enter space, explore, discover clips, build/dismantle arc

- Prepare presentation

Readings & Feedback Reflection

Reading 1: Eva Sutton, “Conversation: Critique” (The Art of Critical Making, 2013)

A roundtable conversation among five RISD artists, designers, and faculty, each visualizing the critique process through drawings.

Key takeaways:

- Description before judgment. Christina Bertoni’s matrix (Form, Content, Material, Process) gives a framework for articulating what you see before deciding what you think. Critique starts with observation, not opinion.

- The V-shaped distillation. Elliott Romano describes critique as a funnel: peers ask questions, connections surface, and the work either confirms or challenges your original intent. Sometimes putting work up for critique is the first time you truly see it.

- Hold the comments. Multiple participants emphasized not reacting immediately. Let feedback sit for a couple of days. The ideas that keep coming back are the ones that matter.

- The teacher becomes invisible. Eva Sutton and Daniel Hewett both describe the ideal critique as one where the facilitator steps back and ideas emerge from the maker. The work is a vehicle for understanding potential, not just for evaluation.

- Ideas crystallize over time. Sutton’s cloud diagram shows how random ideas, through repeated conversation, form connections, cluster, prune, and eventually reveal a generative core idea.

Reading 2: Sheila Heen & Douglas Stone, “Find the Coaching in Criticism” (Harvard Business Review, 2014)

Focuses on the receiving side of feedback. Core idea: improving the skills of the giver won’t help if the receiver can’t absorb what’s said.

Three triggers that block feedback:

- Truth triggers - the content feels wrong or unhelpful

- Relationship triggers - colored by who delivers it

- Identity triggers - threatens your sense of self

Six steps to receive better:

- Know your tendencies

- Disentangle the “what” from the “who”

- Sort toward coaching (hear advice, not judgment)

- Unpack the feedback (where is it coming from? where is it going?)

- Ask for just one thing

- Engage in small experiments

Reflection: How I Receive Feedback

At this stage, I don’t need evaluation (“is this good?”) or technical advice (“use this tool”). What I need is dialogue that helps me articulate what I’m already thinking but haven’t found words for yet. The feedback I’m looking for is simple: ask me questions rather than give me answers.

My instinct when receiving feedback is to defend and explain. Not because I think the other person is wrong, but because I feel my reasoning hasn’t been fully heard yet. As a lighting designer, every decision has layers of thinking behind it, but others only see the result. When someone questions that result, I want to open up the process so they can see why.

This means my primary trigger is identity-based: when feedback conflicts with my own understanding of the work, I feel the need to bridge that gap. On the other hand, when feedback is simply off-base (truth trigger), I tend to disengage and zone out rather than argue.

Time changes everything. After a few days, I can step back and see that the other person was thinking from a different starting point. Reading 2’s “unpack the feedback” is something I naturally do, but I need time for it. Reading 1’s advice to “hold the comments for a couple of days” fits my pattern exactly: my first reaction is to defend, but my real understanding comes later.

What I want to be mindful of this semester: recognizing that initial urge to explain, and giving myself permission to just listen first.

Next Steps

- Collect and catalog all existing clips (photos, videos, audio) from the NYC apartment, organized by location

- Prototype location-triggered interaction (walk to spot, fragment appears)

- Test embedding video/photo/sound into the 3DGS VR space

- Edit Insta360 + Meta Quest footage into documentation video

- Write initial “spatial text” based on the NYC apartment experience

- Rough path design (which locations, what media, what order)

- Prepare questions for Camila 1-on-1

Week 2 | 2026-02-03 ~ 2026-02-09