Week 3: From Concept to Prototype

Translating the interaction concept into a working prototype. Story frame selection, space decay implementation, and Demo Day prep.

February 16, 2026

Overview

Week 2 established the direction: a 3DGS-captured NYC apartment in VR, with location-triggered memory fragments and a space decay mechanic. The 1-on-1 confirmed the scope and pushed toward experience design. Week 3 is about making it real.

Goals This Week

From the 1-on-1 feedback and current plan:

- Collect and catalog all existing clips (photos, videos, audio) from the NYC apartment, organized by location

- Decide on story frames: which memories for which locations

- Prototype gaze-triggered interaction (details below)

- Test space decay mechanic (splat count/size decrease as clips are discovered)

- Edit documentation video (Meta Quest + Insta360 footage from VR Test 2)

- Think about the full experience design: what happens before and after the headset

Interaction Mechanic: Gaze-Triggered Clips

Memories emerge from the objects and surfaces in the space that carry them. The desk held hundreds of meals over the year, so food photos surface when the viewer looks down at it. The window framed the street across seasons, so the view appears when the viewer looks out. Each object in the room is a vessel for the life that happened around it.

How it works:

- Each clip has a position (where the memory happened) and an orientation (the natural viewing angle for that location)

- Two conditions trigger the reveal:

  - Proximity: the viewer walks close to the memory’s location

  - Gaze direction: the viewer looks toward the clip (e.g., looking down at the desk, looking out the window)

- When both conditions are met, the clip gradually reveals itself
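The two conditions above can be sketched outside the engine as a distance check plus an angle check. This is a minimal sketch, not the BP_Clip Blueprint itself: the names `ClipTrigger`, `radius`, and `max_angle_deg` are hypothetical, the units are arbitrary, and for simplicity the gaze is compared against the direction from the viewer to the clip rather than against the clip's stored orientation.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _length(v: Vec3) -> float:
    return math.sqrt(_dot(v, v))


@dataclass
class ClipTrigger:
    position: Vec3        # where the memory happened
    radius: float         # assumed proximity threshold
    max_angle_deg: float  # assumed gaze tolerance


def gaze_angle_deg(clip_pos: Vec3, viewer_pos: Vec3, viewer_forward: Vec3) -> float:
    """Angle between the viewer's forward vector and the direction to the clip."""
    to_clip = _sub(clip_pos, viewer_pos)
    denom = _length(to_clip) * _length(viewer_forward)
    if denom == 0.0:
        return 0.0  # viewer is exactly on the clip, or has no forward vector
    cos_a = max(-1.0, min(1.0, _dot(to_clip, viewer_forward) / denom))
    return math.degrees(math.acos(cos_a))


def is_triggered(trigger: ClipTrigger, viewer_pos: Vec3, viewer_forward: Vec3) -> bool:
    """True only when the viewer is both close enough and looking toward the clip."""
    close_enough = _length(_sub(trigger.position, viewer_pos)) <= trigger.radius
    angle = gaze_angle_deg(trigger.position, viewer_pos, viewer_forward)
    return close_enough and angle <= trigger.max_angle_deg
```

A larger `max_angle_deg` makes a trigger more forgiving, so a clip meant to be found first could simply be given a wider tolerance.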

What it feels like: You’re walking through a frozen, static world. When you approach a familiar spot and look the way you naturally would, a memory surfaces right where it happened. The static world briefly comes alive at that specific location.

You discover them by being present in the same spot and looking naturally at where life happened.

Technical Implementation

For full Blueprint specs, material setup, and implementation details, see the Tech Dev Log.

Summary of key systems built:

- BP_Clip: gaze-triggered memory fragment actor with proximity + direction detection, progressive reveal via openFactor = distFactor * angleFactor, smooth lerp, photo/video support, audio via MediaSoundComponent

- BP_ExperienceManager (planned): tracks discovery count, drives 3DGS space decay based on discoveredRatio

- M_Clip material: shared for photo and video, supports alpha-masked textures for transparent backgrounds

- Sun/sky system: Directional Light rotation driven by Timeline, with Sky_Sphere Refresh Material
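The progressive reveal named in the BP_Clip summary (openFactor = distFactor * angleFactor, followed by a smooth lerp) could look roughly like the sketch below. The linear falloff curves, the `speed` parameter, and all function names are assumptions for illustration; the actual Blueprint math is in the Tech Dev Log and may differ.

```python
def clamp01(x: float) -> float:
    return max(0.0, min(1.0, x))


def dist_factor(distance: float, radius: float) -> float:
    """1.0 at the clip, falling linearly to 0.0 at the trigger radius (assumed curve)."""
    return clamp01(1.0 - distance / radius)


def angle_factor(angle_deg: float, max_angle_deg: float) -> float:
    """1.0 when looking straight at the clip, 0.0 at the angle limit (assumed curve)."""
    return clamp01(1.0 - angle_deg / max_angle_deg)


def open_factor(distance: float, radius: float,
                angle_deg: float, max_angle_deg: float) -> float:
    """Target reveal amount: both factors must be non-zero for the clip to open."""
    return dist_factor(distance, radius) * angle_factor(angle_deg, max_angle_deg)


def smooth_toward(current: float, target: float, speed: float, dt: float) -> float:
    """Per-frame lerp so the reveal eases in and out instead of snapping."""
    return current + (target - current) * clamp01(speed * dt)
```

Because the two factors are multiplied, either walking away or turning away alone is enough to fade the clip out, which matches the "turn away, it fades" behavior in the experience arc.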

Story Frame Selection

Core Insight: Repetition, Not Events

The memory collection is not about “what happened.” It’s about “how days were spent.” ~40% of all photos are overhead shots of meals at the same desk, same angle, different days. The window shows the same street across seasons. The rooftop appears at golden hour, deep night, and covered in snow. The story is daily ritual, not dramatic events.

Memory Placement by Location

| Location | Memories (cycle on repeat visits) | Trigger | Medium |
|---|---|---|---|
| Desk (looking down) | Meals cycling: lu rou fan, jian jiao, salmon fried rice, dan bing + soy milk, miso soup, curry rice… | Proximity + downward gaze | Photos |
| Window (looking out) | Seasonal progression: summer street, golden hour, sunset clouds, snowy street, night neon | Proximity + forward gaze toward window | Photos + videos |
| Closet (looking toward) | Clothes, personal items, traces of daily life | Proximity + gaze toward closet | Photos |
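The "cycle on repeat visits" column can be sketched as a per-location queue that hands out the next memory on each visit. This is a sketch under assumptions: the class name, the file names, and the choice to return nothing once a location is exhausted (rather than wrapping around) are all hypothetical, and whether the viewer can exhaust a location is still an open question.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LocationMemories:
    name: str
    clips: list[str]  # ordered memories for this location
    visits: int = 0   # how many memories have been shown so far

    def next_memory(self) -> Optional[str]:
        """Return the memory for this visit, or None once the location is exhausted."""
        if self.visits >= len(self.clips):
            return None
        clip = self.clips[self.visits]
        self.visits += 1
        return clip

    @property
    def exhausted(self) -> bool:
        return self.visits >= len(self.clips)


# Hypothetical file names, for illustration only.
desk = LocationMemories("desk", ["lu_rou_fan.jpg", "jian_jiao.jpg", "curry_rice.jpg"])
first = desk.next_memory()   # first visit shows the first meal
second = desk.next_memory()  # a return visit shows a different one
```

Swapping `return None` for a modulo index would turn this into an endless loop of memories instead of a finite supply.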

Design Decision: No Guidance

- No arrows, no UI hints, no prompts

- Room is small enough that curiosity drives exploration naturally

- First trigger (desk) has a wider activation angle so it’s easy to discover

- Once the viewer triggers one memory, they understand the mechanic

Experience Design

Full Experience Arc

Black → Build → Freeze → Discover → Repeat → Decay → Empty → Black

1. Black. Headset on. Nothing.

2. Build. Bed assembly timelapse plays as a floating image in the dark. Someone building a bed in an empty room. Timelapse ends, image dissolves.

3. Freeze. 3DGS particles materialize around the viewer. The apartment forms: bed, desk, window, kitchen. Everything is frozen. No sound. Like standing inside a photograph.

4. Discover. Viewer walks to the desk, looks down. A bowl of rice appears. Turns away, it fades. Walks to the window, looks out. Summer street. The viewer learns: approach a familiar spot, look naturally at where life happened, and the memory surfaces.

5. Repeat. Viewer returns to the desk. Different meal. Returns again. Another one. The same position, but time is flowing through it. The window shifts from summer to sunset to snow. The rooftop goes from golden hour to deep night.

6. Decay. Each repeat visit loosens the 3DGS at that location. Desk edges dissolve. Window frame goes transparent. The bed blurs. The space doesn’t need to hold its form anymore. The memories have already moved from the room into the viewer. The form was just a vessel.

7. Empty. The space is mostly particles now. The last visit to the desk reveals nothing. Memories exhausted. Then: the move-out video plays. Empty room. Bare floor. A farewell to the space.

8. Black. End.

Arc Summary

Empty room → Built → Lived in → Remembered → Let go → Empty room

The beginning and end are both empty rooms, but they mean completely different things.

Video Placement

54 videos analyzed, spanning Aug 2024 - Aug 2025. Core principle: most memories are static (photos). Only a few moments are alive (videos). This makes video moments hit harder.

| Placement | Video | Why |
|---|---|---|
| Opening | IMG_0800/0802 - move-in day, empty room, unpacking | Story begins |
| Window (final visit) | IMG_3633 - rain on window glass at sunset, 13s | Last window memory is alive: rain, light, motion. The shift from static to dynamic stops the viewer. |
| Kitchen | IMG_0904 - Tatung rice cooker close-up | Not about “cooking what” but “where I come from.” Taiwanese cultural marker. |
| Sky (final visit) | IMG_1016 - peak golden hour on rooftop | Photo cycle ends, last visit is a moving sunset. Sky comes alive. |
| Ending | Move-out farewell video | Story ends |

Other notable footage for potential use:

- IMG_1012-1019: 8-clip rooftop sunset sequence (same position, sun high to set), can stitch into timelapse

- IMG_3583: 16-min desk/window sunset (likely timelapse, 1.79GB)

- IMG_2760: Snow-covered rooftop (same spot as sunset, seasonal transformation)

- IMG_1747: Person wearing Meta Quest in the same bedroom (meta: VR within VR)

- IMG_2928: 4-min full room walkthrough (spatial reference)

- IMG_0001/0002: 4K apartment walkthroughs (photogrammetry reference)

Will the Viewer Care?

Biggest concern: without context, the viewer is just walking into a stranger’s room looking at food photos. They don’t know who lived here, why it matters, or that this place doesn’t exist anymore. The emotional weight doesn’t transfer.

But adding narration or text overlays turns it into a documentary. Not what I want.

A few directions I’m thinking about:

- Give context before the headset. Doesn’t need much. A few printed photos on the wall, or just one sentence: “This is the room I lived in for a year in New York.” Knowing it’s real is enough.

- Sound. The frozen space shouldn’t be completely silent. Low ambient city noise (traffic, AC hum). When memories trigger, the sound shifts with them: oil sizzling, street sounds, rain on glass. It doesn’t need to tell a story, but the space needs to feel real.

- Decay does the guiding. The viewer doesn’t need to be told what to do. When they notice “things change when I come back” and “this place is disappearing,” curiosity takes over.

- Not everyone needs to get it. Some people will find it boring. That’s fine. The people who slow down will get something out of it.

The most critical one is the first: context before the headset. One sentence plus sound is enough.

Open Questions

- Is it purely VR, or does the physical space before/after the headset matter?

- Should there be ambient sound (city hum, distant traffic) in the frozen space, or pure silence?

- Pacing: how many repeat visits before decay becomes noticeable?

- Should the viewer be able to discover ALL memories, or does the space collapse before that’s possible (you can never recover everything)?

- Depth estimation for subtle parallax on some photos (window/rooftop scenes)? Or keep all strictly flat?

- Hand interaction: after a memory appears, the viewer can reach out and touch it. Touching makes it disappear. Could drive the decay (viewer causes it) and the cycling (touch removes one, next visit shows a different one). Requires Meta Quest hand tracking.

Concept & Experience

A VR experience built inside a 3DGS scan of my Brooklyn apartment. The viewer enters a frozen, static space and discovers memory fragments (photos, videos, sound) by walking to familiar spots and looking naturally at the objects that carry them. The desk held a year of meals, so food photos surface when you look down. The window framed the street across seasons, so the view appears when you look out. Each object is a vessel for the life that happened around it.

As more memories are discovered, the space gradually dissolves. 3DGS particles loosen, edges fade, surfaces blur. The space doesn’t need to hold its form forever. Memory lives inside us as feeling, not objects. The experience moves from a fully formed apartment to abstract particles to emptiness.

Empty → Build → Live → Freeze → Discover ⟲ → Let go → Empty

The beginning and the end are both empty rooms, but they mean completely different things.

Progress Log

Prototype Documentation

0. 3DGS Source Scan

The original iPhone video used to generate the 3DGS scene via Luma AI. A 49-second walkthrough of the Brooklyn apartment.

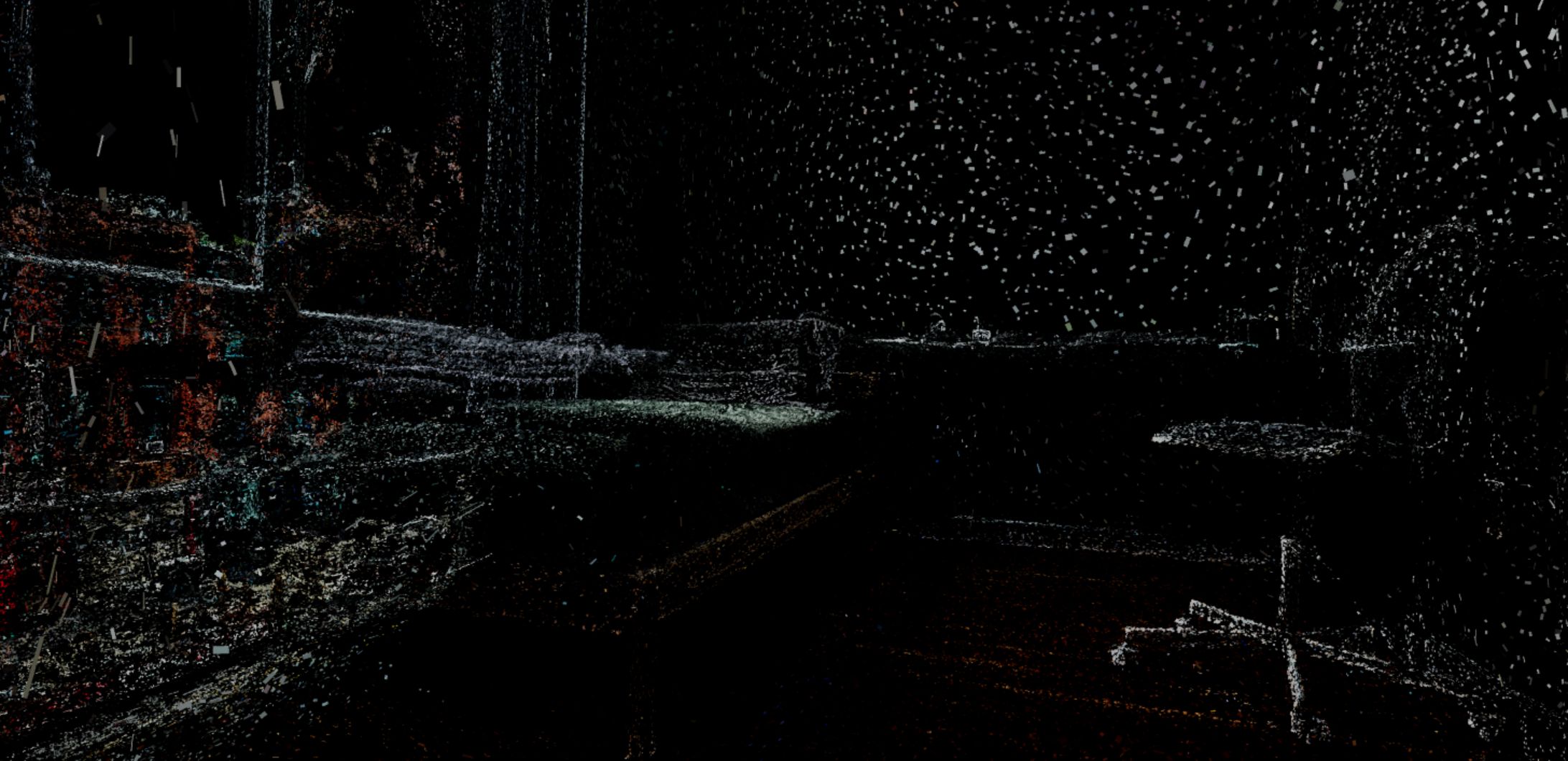

1. 3DGS (Rendered in Unreal Engine)

The NYC apartment rendered as a 3DGS scene in Unreal Engine. Multiple angles captured: bedroom with bed/desk/chair, window looking out to Brooklyn street, closet, bathroom door. The 3DGS reconstruction has a painterly quality with visible splat artifacts at edges, but the overall spatial feeling is convincing.

2. BP_Clip Testing & Iteration

First test: placed multiple food photos on the desk area simultaneously. The desk has the most memory density (20+ overhead meal shots taken over the year), so it was the natural starting point for BP_Clip interaction testing.

Iteration: discovered that alpha-masked (background-removed) photos integrate much better with the 3DGS environment than rectangular frames. Created a composite image of all 20 food photos with transparent backgrounds. When placed on the desk surface, they look like they belong in the space rather than floating as separate UI elements.

3. Particle Decay States

Captured the 3DGS at different splat count/size levels to visualize the decay progression:

- Full fidelity (5): Room is clearly recognizable. Bed, window, curtains, wood floor all visible with detail.

- Mid decay (3-4): Room structure holds but surfaces become impressionistic. Edges dissolve, textures blur.

- Heavy decay (2): Chunky splats. Room is barely recognizable as a room. Shapes are abstract.

- Near empty (0-1): Almost completely dark. Only sparse edge particles remain, like stars in a void. The room has become a memory of a memory.

This maps directly to the experience arc: as the viewer discovers more clips, the space progresses from full fidelity toward particle abstraction.
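One way the planned BP_ExperienceManager could map discovery progress onto the captured decay states is sketched below. The linear mapping from discoveredRatio to the 5..0 levels is an assumption for illustration; the real pacing (how many visits before decay becomes noticeable) is still an open question.

```python
import math


def discovered_ratio(discovered: int, total: int) -> float:
    """Fraction of clips found so far; 0.0 when there are no clips at all."""
    return discovered / total if total > 0 else 0.0


def decay_level(ratio: float, max_level: int = 5) -> int:
    """Map a ratio in [0, 1] to a level: 5 = full fidelity, 0 = near empty (assumed linear)."""
    ratio = max(0.0, min(1.0, ratio))
    return max_level - math.floor(ratio * max_level)


def decay_label(level: int) -> str:
    """Names of the captured decay states, keyed by level."""
    if level >= 5:
        return "full fidelity"
    if level >= 3:
        return "mid decay"
    if level == 2:
        return "heavy decay"
    return "near empty"
```

With this mapping, finding half of the clips puts the room at level 3, the lower end of mid decay; a non-linear curve would hold the room together longer before it falls apart.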

4. VR Test Recording

First-person VR footage captured from Meta Quest headset inside the 3DGS apartment, combined with Insta360 360-degree capture of the same session.

Sun/Sky Time Progression (in progress)

Directional Light pitch rotation controls sun position. Sky_Sphere follows with Refresh Material calls. Plan: Timeline driving rotation, connectable to discovery progress. See Tech Dev Log for details.
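If the Timeline is eventually tied to discovery progress, the sun position reduces to interpolating the light's pitch between two angles. The sketch below assumes a simple linear blend; the start and end pitch values are placeholders, not measurements from the scene.

```python
def sun_pitch_deg(progress: float,
                  start_pitch_deg: float = -60.0,
                  end_pitch_deg: float = -5.0) -> float:
    """Blend the light's pitch from a high sun to a low sun as progress goes 0 -> 1.

    Both default pitch values are placeholders for illustration.
    """
    t = max(0.0, min(1.0, progress))
    return start_pitch_deg + (end_pitch_deg - start_pitch_deg) * t
```

Feeding this function the discovered ratio instead of elapsed time would make the light shift only when the viewer finds something, so the day advances with the memories rather than with the clock.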

Next Week: Demo Prototypes Due (2/17)

Target: NYC apartment VR space with at least one location-triggered clip working. Bring headset for people to try.

Week 3 | 2026-02-10 ~ 2026-02-16